

Rescue, Don’t Replace

One of the things which attracted us to our house about 30 years ago was a great feature: what is known as a “Chinese Circle” in the courtyard end wall, which provides a view into, from and through the courtyard from both the house and the garden. We place sculptures so they are viewed through it, we light it at Christmas, it’s very much part of what makes our house.

Unfortunately, a few years after we moved in, it became apparent that the original wall had not been built very strongly and was in some danger of collapse, so about 25 years ago we had it rebuilt. We contracted a local builder who agreed a much stronger double-thickness structure, plus larger foundations which we hoped would be adequate.

While the wall itself was impressively strong, we’re on clay, and over the years it became clear that with each cycle of wet and then hot weather the foundations were moving slightly. In recent years this accelerated: this summer the wall moved by several millimetres, to the point where there was some risk of collapse. The wall itself was still stable and uncracked; it was simply leaning into the garden, as a whole, by about 5°.

The Leaning Wall of Effingham

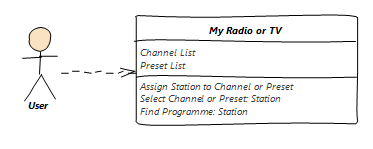

Knowing the wall was still stable, I approached several subsidence specialists. They made it very clear they were not interested in such a small job, claiming that the simplest solution was to knock the wall down and rebuild it. A reputable local builder said very much the same thing: he was happy to quote for rebuilding, at enormous cost, but when we pressed him for a quote to stabilise the wall he effectively refused, offering a figure little different from the rebuild option.

Apart from the impact on our finances, this just felt wrong and wasteful. For one thing, a rebuild would require at least £1000 worth of new bricks, with the existing ones being disposed of as rubble. The wall was strong and undamaged apart from the lean. Even if it could not be fully righted, it would be acceptable simply to stabilise and support it where it was. Why was no-one prepared to do that?

Working with another local builder, Frances and I came up with the idea of creating two steel buttresses to stabilise the wall in place. We were quite keen on the option, but it became apparent that his steel fabricator was going to charge a fortune to make the buttresses, meaning we would pay a lot of money for an aesthetically questionable partial solution.

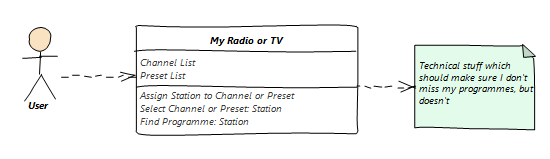

Just as we were in danger of exhausting the local directories, we were introduced to Tomasz, a friend of a friend, and his team of Polish builders. Initially he wanted to quote for a rebuild, but when pressed he agreed that it should be possible to jack the wall back to nearly vertical and then underpin it. His quote to do so was not much more than half the rebuild price, he could start almost immediately, and we almost bit his hand off.

On the appointed day a team of Polish chaps turned up with shovels and a tiny digger, and proceeded to dig two deep trenches on either side of the wall. I was a little afraid that it would collapse during this process, but with strategically placed props and wooden supports they managed to keep it all in place.

The first attempt at jacking used two steel props with contact points halfway up the wall. This quickly reduced the lean angle by about half, but we were concerned that the wall might crack at its base if it moved and the foundation didn’t. We were prepared to stabilise the wall in its new position, but the Polish guys went back to digging and created a new arrangement in which two smaller jacks could be used to twist the foundation itself.

The next challenge was finding the right jacks. They had one small hydraulic jack, which was pretty good, and a bunch of modern car jacks which were clearly not going to work. However, I rummaged in the back of my garage and found a bottle jack rescued from an old Ford Transit in the 1970s, which turned out to be exactly the right piece of equipment. Twenty minutes of careful jacking on the foundation twisted it with the wall intact, and we had a perfectly straight wall again.

My faith in the strength of the wall was fully vindicated – it didn’t crack or warp at all.

The rest of the process was straightforward, albeit physically hard work: progressively digging by hand and pouring extended foundations, wider and deeper than the old ones.

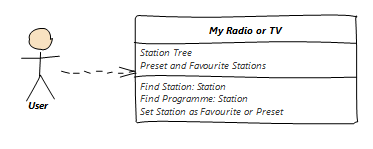

We decided to finish the new structure by building two brick buttresses on the garden side of the wall. They are not strictly required, because the wall is still uncracked and now vertical, but they enhance its look and ensure that any cracking right at the base of the wall won’t compromise the solution. Tomasz procured 100 matching bricks, and I was given the task of designing the buttresses to use them. My design used 98. We also needed to cap off the top bricks, whose dimples would otherwise collect water. My solution was porcelain tile caps, and I impressed the guys by getting out my own electric tile cutter and making the required caps from a single yellow floor tile left over from our 2006 bathroom refit.

Brick Buttresses

Overall, the job took an average of two men just over a week, and the bill was under two-thirds of the cheapest rebuild quote. The excavated clay had to be removed from site, but otherwise there was zero waste, apart from two spare bricks!

The guys tidied up and disappeared, making good so well you’d never know they’d been there. The next day we watched an episode of “Grand Designs” in which the house was prefabricated 200 miles away from the plot and moved as completed modules, which were craned onto waiting foundations. Although the process was relatively painless, it was enormously expensive, and at several points the prefabricated structure had to be hacked about with axes and chisels to accommodate unexpected service positions, which just felt wrong. Essentially, the structure didn’t allow for adjustment.

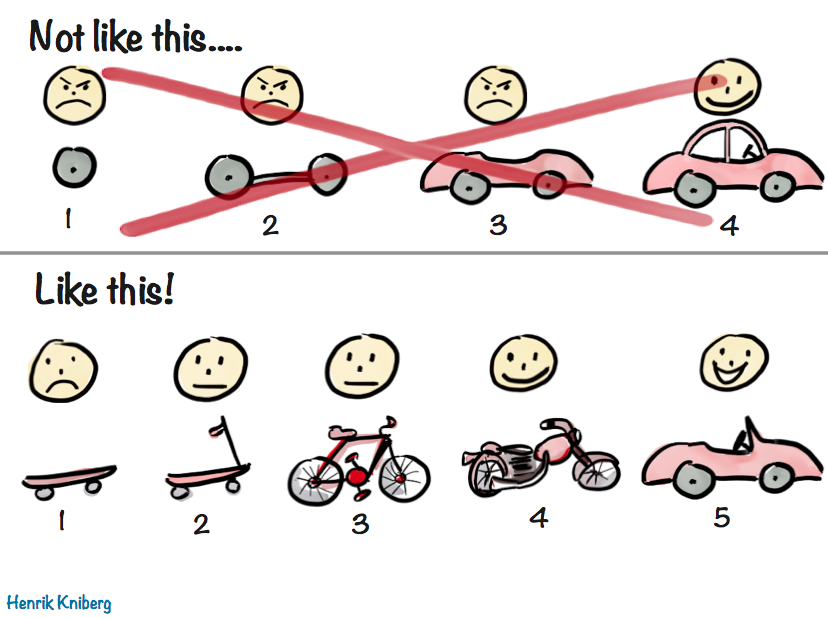

Good architecture should be accessible and adjustable. I’ve always applied this principle both to the software I have designed in my professional career and to the hardware solutions I have developed for our living space. For example, I make sure that pipes and wires run in accessible spaces and allow for change. However, I’ve usually accepted that this might not be possible at the lower levels of physical architecture, the “Structure” layer of the Frank Duffy / Stewart Brand “shearing layers” model.

Now I’m not so sure. We stuck to our guns, and we adjusted a brick wall!

With a big dziękuję to Tomasz, Rafal and Artur.
